Nightshade AI Poison is a new tool that could change how people interact with AI systems. It can be used to trick AI systems into making incorrect judgments or predictions, but it can also be used to improve them by exposing their weaknesses.

Nightshade AI Poison has seen rapid uptake since its October 2023 launch: more than 250,000 downloads were registered in its first week alone. This rapid adoption has prompted extensive discussion of its possible benefits and responsible use, and it highlights growing awareness of AI risks and the importance of reliable testing procedures.

By making people more aware of where AI systems are weak, Nightshade can also be used to strengthen their security. Once these weaknesses are found and fixed, AI systems are better defended against attacks.

What is Nightshade AI poison?

Nightshade AI Poison is a new tool that lets users “poison” AI models by subtly altering images in ways that are invisible to the naked eye. When an AI model is trained on the poisoned images, it learns to misclassify scenes and objects.

Although the tool is still in the early stages of development, it has the potential to be an effective way to assess how robust AI models are and to protect them from adversarial attacks. Adversarial attacks are attempts to trick AI models into making inaccurate predictions.

For example, a researcher could use Nightshade to create a poisoned image of a cat that looks like a dog to an AI model. When the model is trained on such images, it learns to classify cats as dogs, which could lead it to make incorrect predictions in the real world.
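The cat-and-dog scenario can be illustrated with a toy model. The sketch below is not Nightshade's actual method: it assumes a hypothetical 1-nearest-neighbour classifier and made-up two-number feature vectors standing in for images. It only shows how a few training samples whose features look like one class but whose labels say another can change what a model learns.

```python
def nearest_label(training_set, features):
    """Return the label of the training sample closest to `features`."""
    def squared_distance(a, b):
        return sum((x - y) ** 2 for x, y in zip(a, b))
    closest = min(training_set, key=lambda sample: squared_distance(sample[0], features))
    return closest[1]

# Hypothetical feature vectors: cats cluster near (0.2, 0.2), dogs near (0.8, 0.8).
clean_training_set = [
    ((0.20, 0.25), "cat"), ((0.15, 0.20), "cat"), ((0.25, 0.15), "cat"),
    ((0.80, 0.75), "dog"), ((0.85, 0.80), "dog"), ((0.75, 0.85), "dog"),
]

# Poisoned samples: features that sit inside the cat cluster but carry the label "dog".
poisoned_samples = [((0.21, 0.22), "dog"), ((0.18, 0.24), "dog"), ((0.23, 0.19), "dog")]
poisoned_training_set = clean_training_set + poisoned_samples

new_cat_image = (0.22, 0.22)
prediction_clean = nearest_label(clean_training_set, new_cat_image)
prediction_poisoned = nearest_label(poisoned_training_set, new_cat_image)
print(prediction_clean, prediction_poisoned)  # prints "cat dog"
```

With the clean training set the new cat image is labeled "cat"; once the poisoned samples are included, the closest training sample carries the label "dog", so the same image is misclassified.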

The tool can also be used to evaluate how resistant AI models are to adversarial attacks. For example, a researcher could use it to generate a dataset of poisoned images and then supply that dataset to the AI model. If the model still classifies the poisoned images correctly, it is considered robust to such attacks.
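A robustness check of this kind boils down to comparing accuracy on clean inputs with accuracy on altered inputs. Here is a minimal sketch under loud assumptions: the "model" is a hypothetical brightness threshold, the "images" are three-pixel lists, and `shift_against_label` is an illustrative stand-in for a real poisoning tool.

```python
def predict_brightness(image):
    """Toy classifier (an assumption for this sketch): 'bright' if the mean pixel exceeds 0.5."""
    return "bright" if sum(image) / len(image) > 0.5 else "dark"

def accuracy(model, images, labels):
    """Fraction of images for which the model returns the expected label."""
    correct = sum(1 for image, label in zip(images, labels) if model(image) == label)
    return correct / len(images)

def shift_against_label(image, label, epsilon):
    """Nudge every pixel by epsilon in the direction that works against the true label."""
    direction = -1.0 if label == "bright" else 1.0
    return [min(1.0, max(0.0, pixel + direction * epsilon)) for pixel in image]

images = [[0.2, 0.3, 0.1], [0.8, 0.9, 0.7], [0.45, 0.5, 0.49], [0.52, 0.55, 0.5]]
labels = ["dark", "bright", "dark", "bright"]
perturbed = [shift_against_label(img, lab, epsilon=0.05) for img, lab in zip(images, labels)]

clean_accuracy = accuracy(predict_brightness, images, labels)
perturbed_accuracy = accuracy(predict_brightness, perturbed, labels)
print(clean_accuracy, perturbed_accuracy)  # prints "1.0 0.5"
```

The two images that sit close to the decision threshold flip after a shift of only 0.05, so accuracy drops from 1.0 to 0.5. A large gap between the two numbers is the signal that a model is not robust.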

Why is it important to test the robustness of AI models?

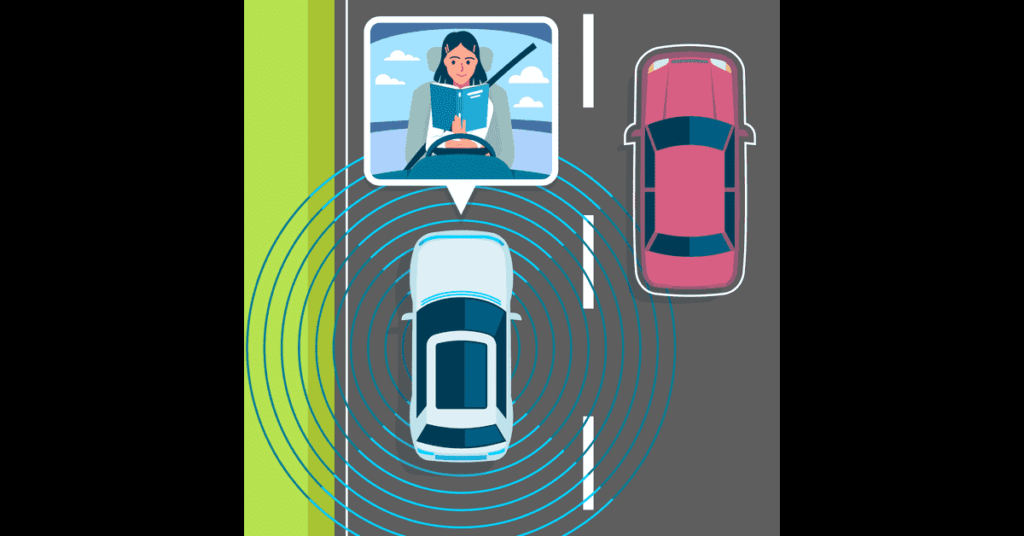

Critical applications such as self-driving cars and medical diagnosis increasingly rely on AI models. To make sure these models still produce correct predictions when faced with unexpected or adversarial inputs, it is crucial to verify their robustness. Nightshade can be used to create poisoned images for evaluating how reliable AI models are.

Test AI robustness to ensure accuracy and safety. Nightshade AI poison can help.

How can this powerful tool be used to test the robustness of AI models?

To use Nightshade AI Poison to evaluate the robustness of an AI model, a user would first need to create poisoned images of the objects that the model has been trained to identify. Poisoned images are created by subtly altering an image’s pixels. The changes should be invisible to the human eye, yet still affect how the AI model interprets the image.
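To make "subtly altering the pixels" concrete, the sketch below caps how far any single pixel may move. It is only an illustration: the shifts here are random, whereas a real poisoning tool would compute them by optimization, and the function names and the 8-pixel "image" are assumptions made for this example.

```python
import random

def add_bounded_perturbation(pixels, epsilon, seed=0):
    """Return a copy of `pixels` (ints in 0..255), each shifted by at most `epsilon`."""
    rng = random.Random(seed)
    perturbed = []
    for value in pixels:
        shift = rng.randint(-epsilon, epsilon)          # random stand-in for an optimized shift
        perturbed.append(min(255, max(0, value + shift)))  # keep a valid pixel value
    return perturbed

def max_abs_difference(a, b):
    """Largest per-pixel change between two images of equal length."""
    return max(abs(x - y) for x, y in zip(a, b))

original = [0, 12, 128, 200, 255, 64, 90, 31]
poisoned = add_bounded_perturbation(original, epsilon=3)
print(max_abs_difference(original, poisoned) <= 3)  # prints "True"
```

A bound of 3 on a 0 to 255 scale is small enough that a viewer would be unlikely to notice it, which is the property the paragraph above describes.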

Here is an example of how Nightshade AI Poison could be used to test the robustness of an image classifier used in a self-driving car:

- A user could generate a poisoned image of a stop sign that looks like a speed limit sign to the AI model.

- The user could then feed the poisoned image to the AI model.

- If the AI model misclassifies the poisoned image as a speed limit sign, then it is not robust to adversarial attacks and could potentially cause a self-driving car to ignore a stop sign.

How does Nightshade AI poison work?

Nightshade makes minute, imperceptible modifications to images so that AI models identify them incorrectly. This makes it an effective method for evaluating AI models’ resilience and for hardening them against adversarial attacks.

Targeted adversarial perturbations (TAPs)

Targeted adversarial perturbations (TAPs) are small, imperceptible changes to images that can cause AI models to misclassify them in a specific way.

Numerous methods, such as optimization and machine learning, can be used to construct TAPs. Projected gradient descent (PGD) is a technique that is frequently used. PGD works by altering an image in small steps, each chosen to increase the probability that the AI model assigns the attacker’s chosen label, while keeping the total change within a small bound so that it stays imperceptible.
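The PGD loop is short enough to sketch in full. The example below is a toy, not Nightshade's implementation: it assumes a hypothetical four-pixel "image" and a fixed logistic model (`WEIGHTS`, `BIAS`) that outputs the probability of the target class "dog". Each iteration takes a signed gradient step toward the target class, then projects the result back into the allowed range around the original image.

```python
import math

# Hypothetical fixed model: probability that a 4-pixel image is a "dog".
WEIGHTS = [1.0, -2.0, 0.5, 1.5]
BIAS = -0.2

def prob_dog(x):
    score = sum(w * xi for w, xi in zip(WEIGHTS, x)) + BIAS
    return 1.0 / (1.0 + math.exp(-score))

def sign(v):
    return (v > 0) - (v < 0)

def targeted_pgd(x0, epsilon=0.25, step=0.05, iterations=10):
    """Push x0 toward the 'dog' class while changing no pixel by more than epsilon."""
    x = list(x0)
    for _ in range(iterations):
        p = prob_dog(x)
        grad = [(1.0 - p) * w for w in WEIGHTS]                # gradient of log p(dog) w.r.t. x
        x = [xi + step * sign(g) for xi, g in zip(x, grad)]    # signed ascent step
        x = [min(max(xi, o - epsilon), o + epsilon) for xi, o in zip(x, x0)]  # project to the epsilon-ball
        x = [min(max(xi, 0.0), 1.0) for xi in x]               # keep pixels in [0, 1]
    return x

cat_image = [0.2, 0.6, 0.4, 0.1]
adversarial = targeted_pgd(cat_image)
print(prob_dog(cat_image) > 0.5, prob_dog(adversarial) > 0.5)  # prints "False True"
```

The original image scores below 0.5 and is treated as a cat. After ten steps the score crosses 0.5, even though no pixel has moved by more than `epsilon`. The bound used here is far larger than a truly imperceptible change would be; it is chosen so that the effect is visible on a four-pixel toy.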

Defending against TAP attacks

Several techniques can be used to defend against TAP attacks, including:

- Training AI models on adversarial data: AI models can be trained on adversarial data, which is data that has been augmented with TAPs. This helps the models learn how to resist TAP attacks.

- Defensive distillation: Defensive distillation is a technique that can be used to create more robust AI models. It works by training a new AI model on the softened output probabilities of another AI model, rather than on hard labels.

- Using input validation: Input validation can be used to detect and reject TAPs. For example, AI models can be trained to identify and reject images that have been augmented with TAPs.

Nightshade itself relies on targeted adversarial perturbations (TAPs): it modifies images in a barely visible way that can nonetheless dramatically alter how AI models interpret them.
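Of the defenses listed above, training on adversarial data is the easiest to sketch. The example below is a toy under stated assumptions: a one-feature logistic model, four hand-picked data points, and a one-step gradient-sign perturbation (often called FGSM) used as a simpler stand-in for a full TAP. It shows the mechanics only, namely that each epoch trains on the clean data plus perturbed copies crafted against the current model.

```python
import math

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def fgsm(x, y, w, b, epsilon):
    """One-step perturbation that increases the loss of a 1-D logistic model."""
    grad_x = (sigmoid(w * x + b) - y) * w        # d(loss)/dx
    direction = (grad_x > 0) - (grad_x < 0)      # sign of the gradient
    return x + epsilon * direction

def adversarial_training(xs, ys, epsilon=0.3, learning_rate=0.1, epochs=50):
    w, b = 1.0, 0.0                              # arbitrary starting point
    for _ in range(epochs):
        # Augment the clean data with perturbed copies that keep their true labels.
        adv_xs = [fgsm(x, y, w, b, epsilon) for x, y in zip(xs, ys)]
        batch = list(zip(xs + adv_xs, ys + ys))
        grad_w = sum((sigmoid(w * x + b) - y) * x for x, y in batch) / len(batch)
        grad_b = sum(sigmoid(w * x + b) - y for x, y in batch) / len(batch)
        w -= learning_rate * grad_w
        b -= learning_rate * grad_b
    return w, b

def accuracy(xs, ys, w, b):
    return sum(1 for x, y in zip(xs, ys) if (w * x + b > 0) == (y == 1)) / len(xs)

xs, ys = [-1.0, -0.8, 0.8, 1.0], [0, 0, 1, 1]
w, b = adversarial_training(xs, ys)
attacked_xs = [fgsm(x, y, w, b, epsilon=0.3) for x, y in zip(xs, ys)]
print(accuracy(xs, ys, w, b), accuracy(attacked_xs, ys, w, b))
```

On this well-separated toy data the model classifies both the clean and the attacked points correctly, so the example does not demonstrate a robustness gain by itself. Its purpose is to show where the perturbed samples enter the training loop.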

Examples of how this powerful AI tool has been used to fool AI models

Nightshade can be used to fool AI models in a variety of ways. Here are a few examples:

- Researchers at the University of Chicago used Nightshade AI poison to fool a popular image classification model into misclassifying images of cats as dogs.

- A team of security researchers used this AI tool to create a poisoned image that was able to fool a facial recognition system into unlocking a smartphone.

- A firm named Draggan is creating a technology that lets artists use Nightshade AI Poison to prevent their artwork from being used for AI model training without consent. This gives artists a way to keep control over their work in a constantly changing digital environment.

Benefits of using Nightshade AI poison to test the robustness of AI models

Here are the benefits of using this tool to test the robustness of AI models:

Identify vulnerabilities in AI models:

Using the AI poison tool, one can produce poisoned images that can be used to target flaws in AI models. This can aid in locating and addressing these weaknesses before an attacker uses them.

Improve the accuracy and reliability of AI models:

The accuracy and reliability of AI models can be improved by using this tool to train them on adversarial data. This is because adversarial data forces AI models to learn to differentiate between genuine and tampered inputs.

Protect AI models from adversarial attacks:

Defensive distillation models can be developed using this tool. Compared to conventional AI models, defensive distillation models are more resistant to adversarial attacks.

Nightshade AI Poison is a useful tool for evaluating the resilience of AI models and enhancing their security and safety.

Challenges of using Nightshade AI poison to test the robustness of AI models

Nightshade is a new kind of adversarial technique created specifically to target AI models. It is a form of attack known as “data poisoning,” in which an AI model’s training data is tampered with to reduce its accuracy.

Because the tool’s changes are so subtle, they can be especially difficult to detect. Rather than adding glaring errors to the training set, it makes small adjustments that can significantly affect the AI model’s performance.

The limitations of Nightshade are being actively addressed through ongoing research and development. These efforts focus on:

- Making effective attacks simpler to create: streamlining the process so that a larger group of users can make use of Nightshade’s capabilities.

- Staying ahead of emerging AI models: Researchers are looking for ways to make sure Nightshade’s techniques continue to work in the face of increasingly complex AI models.

This powerful AI tool is still under development

This means that it is not yet perfect, and a few issues still need to be resolved before it can be used widely.

One of its main problems is that the tool can be challenging to use. Developing successful Nightshade attacks requires a thorough understanding of how AI models work, which means that only a select group of researchers and engineers can currently make use of it.

Another difficulty is that Nightshade attacks must keep changing. As AI models become more sophisticated, the attacks have to become more sophisticated too, so they must be updated regularly to remain effective against the most recent models.

It can be difficult to generate poisoned images that are effective at fooling AI models.

This AI tool could be used to develop malicious adversarial attacks

Nightshade AI Poison can be used to mount malicious adversarial attacks that take advantage of AI systems’ weaknesses. It can trick AI systems into making poor judgments or predictions, which could have harmful effects. For instance, it could be used to deceive an AI system into believing that a harmless image is dangerous or that a real person is a fraud.

While it poses a serious risk, it can also be an effective instrument for enhancing AI system security. We can create stronger defenses against this AI tool by understanding how it operates.

Conclusion:

Summary of the main points:

- Nightshade AI poison is a powerful tool that can be used to fool AI systems into making wrong predictions or decisions.

- It can be used for malicious purposes, such as tricking an AI system into thinking that a benign image is a malicious one or that a legitimate user is an imposter. It can also be used for good, such as improving the security of AI systems by helping us understand their vulnerabilities.

Recommendations for how to use Nightshade AI poison responsibly:

- Nightshade AI poison should only be used by responsible individuals and organizations.

- It should not be used for malicious purposes, such as to harm or deceive others.

- It should be used with caution, as it can have unintended consequences.

Outlook for the future of Nightshade AI poison:

Nightshade AI Poison is a brand-new, quickly developing technology. It is critical to use it carefully and to stay informed about its possible risks and benefits. The tool is expected to grow in importance to both attackers and defenders as AI systems become more sophisticated and powerful.

FAQ

What is Nightshade AI Poison and how does it work?

Nightshade is a tool that can be used to make subtle, invisible changes to digital images. These “poisoned” images can fool AI models into producing inaccurate classifications or predictions.

Can Nightshade be applied maliciously?

Yes, sadly, Nightshade can be abused to produce adversarial attacks that trick AI systems into producing inaccurate or dangerous results. It might be used, for instance, to fool facial recognition software or influence recommendations made by artificial intelligence.

Is Nightshade also beneficial?

Yes. Nightshade is useful for assessing the robustness of AI models and for locating and fixing flaws before bad actors take advantage of them. It can also be used to create more resilient AI models by training them on “adversarial data” that contains poisoned examples.

What ethical challenges does Nightshade raise?

Nightshade is widely used, which raises ethical questions because of its potential for misuse and the difficulty of restricting its spread. Encouraging open discussion of its benefits, its risks, and how to use it responsibly is essential.

What does Nightshade’s future hold?

Nightshade is expected to become more important to both offensive and defensive tactics as AI develops. Responsible use and risk reduction will depend on ongoing research and development.